Set Test Goals

A clear goal is vital when designing a test. Why are you conducting the test? What questions do you want to answer? Your answers define your goals, which in turn drive your design and analysis options. Establishing test goals is a collaborative process that occurs early in the test planning phase, during which requirements representatives, program managers, users, testers, and subject matter experts should reach agreement. Several of the most common types of test goals are described below.

Common Test Goals

Compare Multiple Systems

Tests are often designed to compare two or more systems or variants of the same system across multiple conditions. These tests aim to quantify and test for statistically significant differences in performance among systems that are operating under similar conditions. For example, whether fuel type (bio-fuels vs. fossil fuels) impacts the self-sealing properties of fuel tanks is a comparison question. In this example, fuel tanks holding different mixtures of fuel were fired on and fuel leakage was compared.

A variety of test designs are useful in comparing multiple systems. Matched designs, in which each system is tested under the same set of conditions, are commonly employed. More information on these designs can be found here.
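As a rough illustration of how a matched comparison might be analyzed, the sketch below computes a paired t statistic from the per-condition differences between two systems. The function name `paired_t_statistic` and the leakage values are hypothetical, invented only for this example; they are not data from the fuel tank test described above.

```python
import math
import statistics


def paired_t_statistic(system_a, system_b):
    """Return (mean difference, t statistic, degrees of freedom) for matched pairs."""
    if len(system_a) != len(system_b):
        raise ValueError("a matched design needs one observation per system per condition")
    # Work on the within-pair differences so condition-to-condition variation cancels out
    diffs = [a - b for a, b in zip(system_a, system_b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation of the differences
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    return mean_diff, t_stat, n - 1


# Hypothetical leakage measurements (arbitrary units), one pair per test condition
leak_bio = [12.0, 15.0, 11.0, 14.0, 13.0]
leak_fossil = [10.0, 14.0, 10.0, 11.0, 12.0]

mean_diff, t_stat, dof = paired_t_statistic(leak_bio, leak_fossil)
print(f"mean difference = {mean_diff:.2f}, t = {t_stat:.2f}, df = {dof}")
```

The t statistic would then be compared against a t distribution with the returned degrees of freedom. Pairing by condition is the design choice that matters here: it removes variation between conditions from the comparison, which an unpaired analysis would leave in the error term.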

Screen for Influential Factors

Screening tests are designed to help identify key factors by screening out non-influential factors. They are especially useful at the outset of testing, when we may know little about how the system responds to various factors. They allow us to narrow the field of possible factors prior to more costly testing. Testers conducting a screening test would generate a list of all potential factors that are thought to affect the primary response, design and execute a simple test of these factors, and identify the factors that have the largest impact on the response. Further testing in a sequential test program would focus only on these previously identified influential factors. Factorial and fractional-factorial designs are often used to screen a moderate number of factors, while optimal designs and orthogonal arrays can be helpful with extremely large numbers of factors and levels. More information on common screening designs can be found here.

Characterize across Conditions

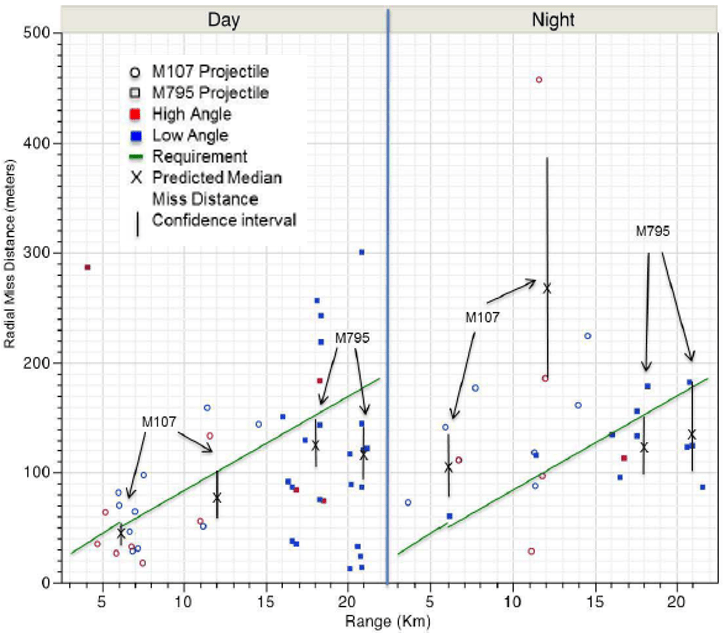

Characterization tests describe performance at each level of the design's several factors (i.e., across the operational envelope). Characterization is one of the most important and common goals of operational testing. It helps us determine whether a system meets requirements across a variety of operational conditions, and it does so by generating precise mathematical models of performance based on test data.

Many kinds of test designs are used for characterization, including factorial, fractional-factorial, response surface, and optimal test designs. The appropriate test depends on the number of factors and factor levels in the design as well as the expected variation in performance across these factors and levels. You can learn more about these specific designs here.
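To make the idea of a factorial design and a fitted performance model concrete, here is a minimal sketch under simplifying assumptions: three two-level factors coded as -1 (low) and +1 (high), one run per combination, and a main-effects-only model. The factor names and responses are hypothetical, and the responses are generated from a known model rather than measured. For a balanced design coded this way, the least-squares coefficient of each factor reduces to the average of the response multiplied by that factor's coded level.

```python
from itertools import product

factors = ["speed", "altitude", "range"]  # hypothetical factor names

# Full factorial design: every combination of low (-1) and high (+1) coded levels
design = list(product([-1, 1], repeat=len(factors)))


def fit_main_effects(design, responses):
    """Least-squares intercept and main-effect coefficients for a balanced
    two-level design whose factors are coded -1/+1."""
    n = len(design)
    intercept = sum(responses) / n
    coefs = [
        sum(run[j] * y for run, y in zip(design, responses)) / n
        for j in range(len(design[0]))
    ]
    return intercept, coefs


# Hypothetical responses from a known model: 50 + 4*speed - 2*altitude + 0*range
responses = [50 + 4 * a - 2 * b + 0 * c for a, b, c in design]

intercept, coefs = fit_main_effects(design, responses)
for name, coef in zip(factors, coefs):
    print(f"{name}: {coef:+.1f}")
print(f"intercept: {intercept:.1f}")
```

Because the responses contain no noise, the fit recovers the generating coefficients. Real test data would include run-to-run variation, and the choice of model terms (interactions, curvature) would depend on the design selected.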

Optimize Performance

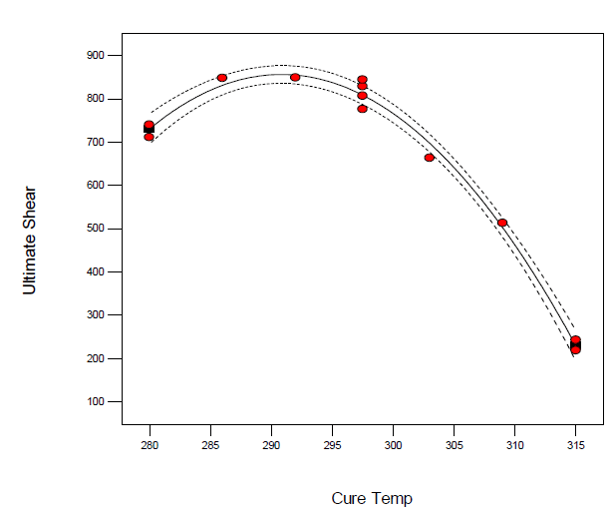

Optimization tests seek to find the combination of controllable factors or conditions that leads to optimal test outcomes (i.e., maximizes desirable or minimizes undesirable outcomes). For example, in the construction of polymers, an optimization test could identify the curing temperature at which polymer shear strength is maximized. These tests are extremely useful in system design and manufacturing, as well as in the development of tactics, techniques, and procedures (TTPs). You can learn about common designs employed in optimization here.
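The curing temperature example can be sketched with a single-factor quadratic model. The code below passes a parabola through responses at three equally spaced factor levels and returns its stationary point. The helper `quadratic_optimum` and the temperature and strength values are hypothetical, chosen only for illustration. An actual optimization test would more likely fit a response surface by least squares over many runs and several factors.

```python
def quadratic_optimum(x_mid, step, y_lo, y_mid, y_hi):
    """Stationary point of the parabola through three equally spaced levels
    (x_mid - step, x_mid, x_mid + step). Returns (x_opt, y_opt, is_maximum)."""
    a = (y_lo - 2 * y_mid + y_hi) / 2  # curvature in coded units
    b = (y_hi - y_lo) / 2              # slope in coded units
    if a == 0:
        raise ValueError("no curvature: the response is linear over these levels")
    u_opt = -b / (2 * a)               # optimum in coded units
    x_opt = x_mid + step * u_opt       # convert back to natural units
    y_opt = y_mid - b * b / (4 * a)    # predicted response at the optimum
    return x_opt, y_opt, a < 0


# Hypothetical shear strengths at curing temperatures of 140, 160, and 180 degrees
x_opt, y_opt, is_max = quadratic_optimum(160.0, 20.0, 23.75, 29.75, 27.75)
kind = "maximum" if is_max else "minimum"
print(f"predicted {kind} at temperature {x_opt:.1f} with strength {y_opt:.2f}")
```

If the predicted optimum falls outside the tested range, it is an extrapolation and would normally prompt further runs near the predicted point.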