Select Factors

Factors are the independent variables that are expected to impact test outcomes. Factors (e.g., Weather, System Type) have varying levels, which are specific values or conditions they take on (e.g., Rainy/Dry, Legacy/New). A good test design controls or manipulates these levels to assess their impact on the response variables. Selecting which factors to account for in your test often begins with generating a large, nearly exhaustive list of factors and then narrowing the scope of the test. The following practices and tools can help make this task more manageable.

Best Practices & Tools for Selecting & Managing Factors

Brainstorm all potential factors that could affect test outcomes: Content experts and individuals who may have experience with similar systems can be especially helpful at this stage, as can tools such as the Fishbone Diagram.

Prioritize the factors: A first step in reducing the number of factors you will test is to identify quality factors. It can help to consider:

1) The ease of control and cost of controlling or varying a factor.

2) The magnitude of impact the factor is expected to have on the test outcome.

3) The likelihood that particular conditions will actually occur.
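The three considerations above can be turned into a rough screening score. The sketch below is only an illustration: the 1-to-5 rating scale, the multiplicative score, and the example ratings are all assumptions made here, not a method prescribed by this guidance. In practice the ratings would come from content experts and prior test data.

```python
from dataclasses import dataclass


@dataclass
class Factor:
    name: str
    ease_of_control: int  # hypothetical scale: 1 (hard or costly) to 5 (easy or cheap)
    expected_impact: int  # hypothetical scale: 1 (negligible) to 5 (large)
    likelihood: int       # hypothetical scale: 1 (rare) to 5 (common)


def priority(factor: Factor) -> int:
    # Assumed scoring rule: multiply the three ratings so that a low rating
    # on any one consideration pulls the factor down the list.
    return factor.ease_of_control * factor.expected_impact * factor.likelihood


# Illustrative ratings only; these are not taken from any real test.
factors = [
    Factor("Time of day", ease_of_control=3, expected_impact=4, likelihood=5),
    Factor("Terrain", ease_of_control=1, expected_impact=3, likelihood=2),
    Factor("System type", ease_of_control=5, expected_impact=5, likelihood=5),
]

ranked = sorted(factors, key=priority, reverse=True)
ranked_names = [f.name for f in ranked]

for f in ranked:
    print(f"{f.name}: {priority(f)}")
```

A ranking like this is a starting point for discussion rather than a decision rule. A factor that scores low only because it is hard to control may still deserve to be recorded rather than dropped.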

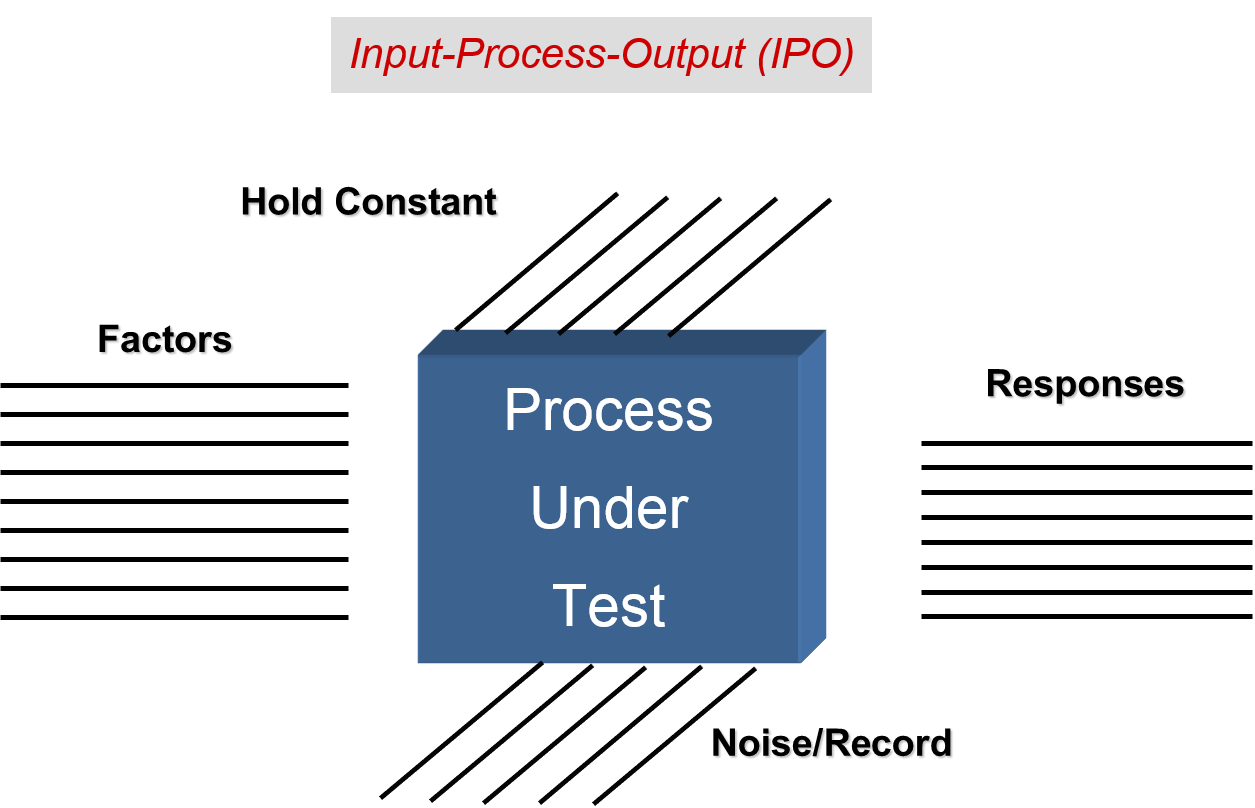

Use existing information: Draw on previous test data and content experts' knowledge whenever possible to help select the most important factors.

Eliminate low-priority factors: Non-influential and unlikely factors can be eliminated from the design. It is important to keep track of the factors or individual levels you leave out to aid in assessing test adequacy.

Manage selected factors: Several options exist concerning what to do with the factors you have chosen to include in your test. These include:

Strategically Vary: Varying the levels of the factor provides the most information and should be the default choice if you are unsure how a factor impacts test outcomes. For example, if it is not clear how a system will perform at night versus during the day, varying the levels of this factor by conducting some test runs during the day and others at night will provide this information. Test resource constraints can limit the degree to which variation is possible, however, so the strategic selection of factors is critical. For example, it may be unreasonable to secure a team of operators for the night runs, or in another case, impractical to move the test from a verdant to a desert location.
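As a minimal sketch of what varying factors looks like in a run plan, the snippet below enumerates every combination of levels (a full factorial) for three two-level factors and randomizes the run order. The factor names and levels reuse this page's examples. The choice of a full factorial, the fixed seed, and the variable names are assumptions for illustration; a real design may use fewer runs or a different structure.

```python
import itertools
import random

# Factors to vary and their levels (examples borrowed from the text above).
levels = {
    "Time of day": ["Day", "Night"],
    "Weather": ["Rainy", "Dry"],
    "System type": ["Legacy", "New"],
}

# Full factorial: one run for every combination of factor levels.
design = [
    dict(zip(levels, combination))
    for combination in itertools.product(*levels.values())
]

# Randomize run order so that time-related drift is not confounded with a factor.
# The seed is fixed here only to make the illustration reproducible.
rng = random.Random(0)
run_order = design[:]
rng.shuffle(run_order)

for run_number, run in enumerate(run_order, start=1):
    print(run_number, run)
```

With three two-level factors this produces eight runs, and each level of each factor appears in four of them.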

Hold Constant: Holding a factor constant means the testers do not allow the factor levels to vary throughout test runs. If, for example, it is unreasonable to conduct test runs at night, all test runs can be conducted during the day, thus holding the factor constant at the "day" level. Using this management strategy limits the conclusions testers can make about the system's performance to the one condition that was tested. It may be possible to infer that the system will perform similarly at other levels, but this is a generalization and requires additional information to limit uncertainty.

Record: This management strategy allows the factor to vary without control but records the variation so that it can be accounted for statistically. If the evaluators chose not to vary the factor of altitude by conducting flight tests at 5,000 ft., 10,000 ft., and 15,000 ft., they might instead keep track of the aircraft's altitude at all times throughout the test.

If the evaluators chose not to vary the factor of night/day, they might instead keep track of illumination and temperature throughout the test. In this case, it is important for the evaluators to consider what it is about night versus day that they expect to impact test outcomes, and to record that quantity.

Test outcomes can then be modeled, taking into account any impact the recorded variation (in altitude or illumination, for example) may have had on the response variables.

Recording factors does not guarantee that all levels of interest will be observed, and failing to restrict levels can increase test variability and negatively impact the primary objective. However, allowing such variation can also increase operational realism. Nuisance factors that cause variation in test outcomes but are not of primary interest (as opposed to treatment factors) are often recorded if they cannot be held constant or eliminated from the design.
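The modeling step described for recorded factors can be sketched as a least-squares fit in which the recorded factor enters as a covariate alongside a varied factor. Everything in the example is an assumption made for illustration: the data are synthetic and noise-free, the linear model is only one possible choice, and the helper names `solve` and `fit_least_squares` are hypothetical. A real analysis should be planned with statistical expertise.

```python
def solve(a, b):
    """Solve the small linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        known = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - known) / m[i][i]
    return x


def fit_least_squares(rows, y):
    """Ordinary least squares via the normal equations (X'X) beta = X'y."""
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    return solve(xtx, xty)


# Synthetic, noise-free data invented for this illustration.
system = [0, 0, 0, 0, 1, 1, 1, 1]  # varied factor: 0 = Legacy, 1 = New
altitude_kft = [5.0, 9.5, 12.0, 15.0, 6.0, 8.0, 13.5, 14.0]  # recorded, not controlled
response = [10.0 + 2.0 * s - 0.3 * a for s, a in zip(system, altitude_kft)]

# Model: response = intercept + system_effect * system + altitude_slope * altitude
X = [[1.0, s, a] for s, a in zip(system, altitude_kft)]
intercept, system_effect, altitude_slope = fit_least_squares(X, response)

print(f"system effect adjusted for altitude: {system_effect:.3f}")
print(f"altitude slope per 1,000 ft: {altitude_slope:.3f}")
```

Because altitude was recorded on every run, its contribution can be separated from the effect of the varied factor. Had altitude not been recorded, the same variation would simply show up as unexplained noise in the response.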

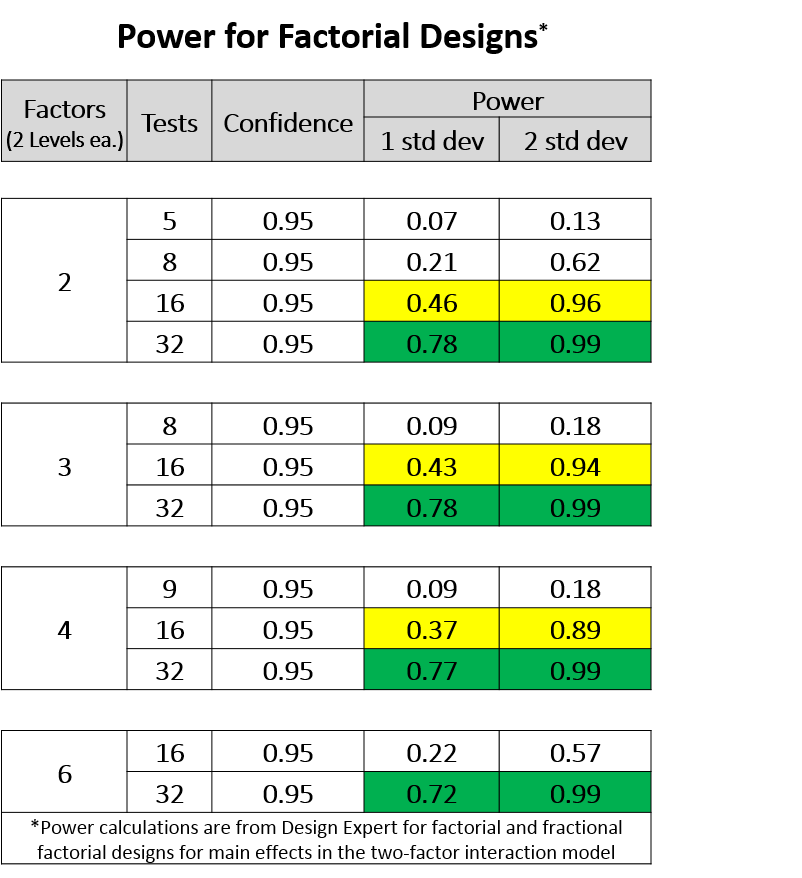

The input-process-output diagram is a tool that can help you conceptualize how factors impact outcomes and track your management decisions.

Assess the impact of factor selection and management on your design: The choices you make regarding factor management will affect the design, analysis, and conclusions that can be drawn from your data. It is a good idea to involve statistical expertise even at the earliest stages of test design. Evaluators are often concerned with how the inclusion and management of factors in a test will affect test size and cost. Contrary to common assumptions, adding or removing factors often does not change the required test size (see Table 1).

You can follow these links to learn more about how factor management schemes impact the quality of your design and possible analyses.

Table 1

Once you have selected factors, you are ready to move on and construct a test design.