
No system has a perfect track record. Thus, even if a system is capable of performing a task, it is important to determine how often it does so in order to describe the quality of the system. Reliability is the ability of an item to perform a required function under given environmental and operating conditions and for a stated period of time. Essentially, reliability assessment asks about a system's continued quality of performance, where it can be improved, and, ultimately, whether it should be accepted or rejected. Reliability is an essential component in the assessment of operational suitability and helps ensure that systems are able to accomplish their missions when needed. Within the defense systems acquisition process, it is important that any system be demonstrated to be reliable. Although there are several different kinds of reliability tests (e.g., reliability life tests, accelerated life tests, and reliability assurance tests), this section focuses primarily on reliability demonstration tests. A number of differences between ordinary system tests and reliability tests (e.g., sampling techniques, unique response variables) make reliability testing a special case. Here, we review the steps in planning and conducting a test to demonstrate a system's reliability.

Reliability Test Goals

Reliability gets to the core of whether or not a system should be acquired, asking "Does this system fail often enough that we should reject it?" or "Given that this system is capable of performing its intended function, does it do so consistently enough to warrant purchasing and investing further in it?" Typically, the goal of a reliability test is to demonstrate that reliability exceeds a required minimum, or that the system meets a set standard. This standard is often established in requirements documentation provided to the evaluators. In other types of reliability tests, the goal can be to indicate how costly the system will be to own and operate by revealing logistical support needs, or how often the system may need maintenance and repairs.

Reliability Response Variables and Requirements

Reliability test response variables are often unique in that they are measured in terms of the probability of failure or success or, alternatively, the amount of operation between failures. Often, reliability response measures are stated in the same terms or units as the reliability requirements they are meant to meet.

Probability of Success/Failure: Probabilistic measures of reliability are computed from categorical responses in the form of success or failure. This could be the proportion of failures across trials such as shots fired or targets detected, or the success or failure of an entire mission. This measure of reliability is most common for single-use systems (e.g., ammunition).
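As a minimal sketch of the probabilistic measure, the Python below computes the observed success proportion and, as one common way to express how strongly that proportion has been demonstrated, an exact one-sided (Clopper-Pearson) lower confidence bound. The function names, the 80% default confidence level, and the choice of the Clopper-Pearson bound are illustrative assumptions, not a prescribed method for any particular test program.

```python
import math


def binom_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k successes in n independent trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


def prob_at_least(s: int, n: int, p: float) -> float:
    """P(X >= s) for X ~ Binomial(n, p)."""
    return sum(binom_pmf(k, n, p) for k in range(s, n + 1))


def success_estimate(successes: int, trials: int) -> float:
    """Point estimate: observed proportion of successful trials."""
    return successes / trials


def lower_confidence_bound(successes: int, trials: int, confidence: float = 0.80) -> float:
    """One-sided exact (Clopper-Pearson) lower bound on the success probability.

    Finds p_L such that P(X >= successes | trials, p_L) = 1 - confidence.
    Bisection works because P(X >= s) increases as p increases.
    """
    if successes == 0:
        return 0.0
    alpha = 1.0 - confidence
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2
        if prob_at_least(successes, trials, mid) < alpha:
            lo = mid  # the bound lies above mid
        else:
            hi = mid  # the bound lies at or below mid
    return (lo + hi) / 2


print(success_estimate(18, 20))                   # 0.9
print(round(lower_confidence_bound(20, 20), 3))   # about 0.923
```

Twenty successes in twenty hypothetical trials give a point estimate of 100%, yet at 80% confidence they support a success probability of only about 92% or more, which is why test size matters when demonstrating a requirement.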

Duration: Reliability is also often measured as the amount of operation (e.g., miles driven, hours operated) before failure or between failures. This measure of reliability is often expressed as Mean Time Between Failures (MTBF). For example, a howitzer may be required to demonstrate an MTBF of 62.5 hours. In contrast to single-use systems, MTBF is common for repairable systems, which make up the majority of the systems tested for the Department of Defense.
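The duration measure can be sketched the same way. The Python below assumes a constant failure rate (exponentially distributed times between failures); that is a modeling assumption, not something a requirement guarantees. Under it, the observed MTBF is total operating time divided by the number of failures, and the probability of completing a mission of length t without failure is exp(-t / MTBF). The function names are hypothetical.

```python
import math


def observed_mtbf(total_operating_hours: float, failures: int) -> float:
    """Point estimate of MTBF: total test exposure divided by failures observed."""
    if failures == 0:
        raise ValueError("MTBF point estimate is undefined with zero failures")
    return total_operating_hours / failures


def mission_reliability(mtbf: float, mission_hours: float) -> float:
    """P(no failure during a mission), assuming a constant failure rate of 1 / mtbf."""
    return math.exp(-mission_hours / mtbf)


def mtbf_for_mission(reliability: float, mission_hours: float) -> float:
    """MTBF implied by a mission reliability requirement (inverse of mission_reliability)."""
    if not 0.0 < reliability < 1.0:
        raise ValueError("reliability must be strictly between 0 and 1")
    return -mission_hours / math.log(reliability)


print(observed_mtbf(1000.0, 16))                  # 62.5
print(round(mission_reliability(62.5, 18), 3))    # about 0.75
print(round(mtbf_for_mission(0.75, 18), 2))       # about 62.57
```

With the howitzer figure above, an MTBF of 62.5 hours corresponds to roughly a 75% chance of completing an 18-hour mission without failure, provided the constant-failure-rate assumption holds.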

Converting Reliability between Probabilistic Mission Duration and Mean Time Between Failures

It is common to convert probabilistic reliability requirements to MTBF. For example, the 62.5-hour MTBF mentioned above could also be expressed as a 75% probability of completing an 18-hour mission without failure. This conversion and its equation are explained on the linked page, along with a discussion of the validity of this approach.

Considerations for Measuring Reliability

Measuring success or failure, or the extent of operation between failures, in order to determine reliability is complicated by the fact that evaluators must define exactly what constitutes mission failure or accomplishment. Especially in operational testing of complex systems, this requires thinking about the potentially multiple functions a system must perform to accomplish mission tasks. In planning an operational mission reliability test, evaluators define mission essential functions (e.g., a tank must move, shoot, and communicate) that are required for a successful mission. This allows them to measure incidents that affect mission accomplishment and to determine mission success or failure.

Reliability Factors

In defense testing, evaluators are often most concerned with mission reliability, which takes into account the intended operational mission, operational environment (desert, mountain, littoral, etc.), and mission requirements. Thus, a system is reliable only if it operates consistently and successfully across the varying levels of these factors. Other types of reliability testing, such as reliability life tests or accelerated life tests, seek to identify how various factors affect reliability. In these tests, evaluators identify failure modes (i.e., functions and conditions associated with greater failure rates). In addition to mission-level failures such as complete system aborts, evaluators might also have logistics-level concerns and record events that require system support (e.g., repairs). In this sense, support personnel can be a highly influential factor contributing to mission failure or accomplishment. In practice, identifying failure modes and support needs often leads to system improvements, especially when a system is relatively new, and evaluators can then track reliability growth over a system's development; the current discussion, however, is limited to one-time assessments of reliability.

Reliability Demonstration Test Design

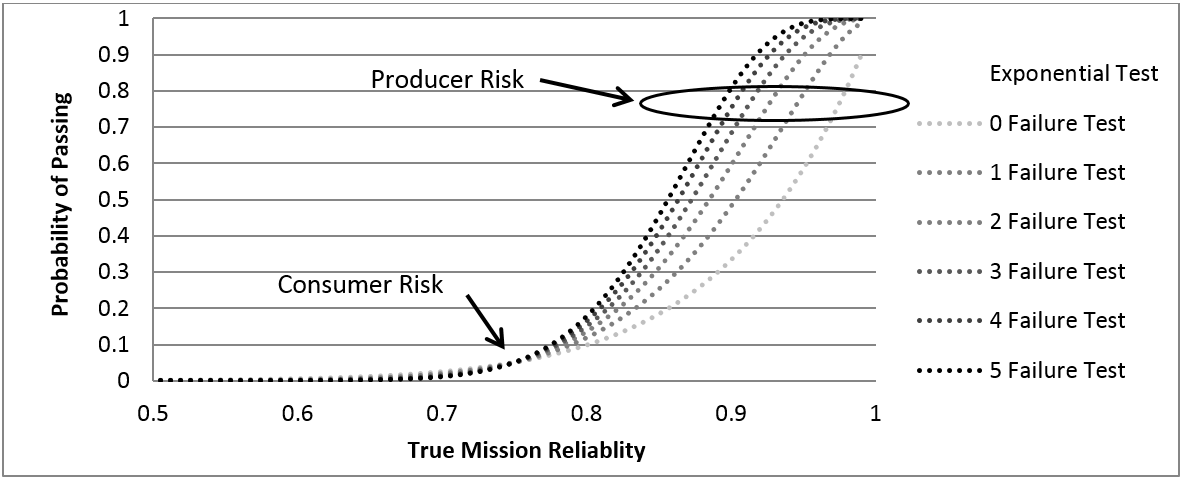

Assessing systems for reliability introduces some unique design aspects. Often, evaluators need to determine the amount of test exposure necessary to confidently demonstrate how a system performs in relation to some standard or requirement. This is a matter of sample or test size, which often involves the number of systems in the test along with the number of shots fired, miles driven, or time on test (e.g., 1,000 operating hours). The more test exposure, the more confident evaluators can be about the system's true reliability.

Acceptance Sampling for Probabilistic Reliability: To determine the test size necessary to demonstrate reliability in terms of the probability of success or failure, evaluators employ acceptance sampling plans. An acceptance sampling plan specifies how much testing is needed to demonstrate, with a stated level of confidence, that the system meets a threshold level of performance. That is, the plan tells the evaluator how many systems, hours, etc., are needed, as well as how many failures are allowed, in order to meet the required reliability. Under a sampling framework, the evaluator tests a small number of system units in order to make statements about the performance of all systems of that type. This sample should be representative of all systems (i.e., randomly selected), because its demonstrated reliability determines whether the system as a whole (i.e., the program), rather than only the individual sampled units, is accepted or rejected. Larger samples give greater precision in differentiating between good and bad systems, and allowing fewer failures increases the probability of rejecting a system.

Operating Characteristic Curves: The Operating Characteristic (OC) curve is the primary tool for displaying and investigating the properties of acceptance sampling plans. OC curves plot the probability of accepting the system against its actual reliability or failure rate, for various test sizes and acceptance criteria (i.e., failures allowed). Central to the development of these curves is the balance between Consumer Risk and Producer Risk. Consumer Risk is the probability that an unreliable system will be accepted, whereas Producer Risk is the probability that a reliable system will be rejected. OC curves are used primarily to determine the test size necessary to confidently demonstrate reliability.
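The OC-curve calculations can be sketched in a few lines of Python. For success/failure tests the sketch uses the binomial distribution (accept if at most c of n trials fail); for duration tests it assumes failures follow a Poisson process, so a fixed-length test is accepted if at most c failures occur. Evaluating Consumer Risk at the required reliability, and Producer Risk at a higher hypothetical "goal" reliability, is a common convention adopted here as an assumption; all function names and numbers are illustrative.

```python
import math


def binom_cdf(c: int, n: int, p_fail: float) -> float:
    """P(at most c failures in n independent trials, each failing with p_fail)."""
    return sum(math.comb(n, k) * p_fail**k * (1 - p_fail) ** (n - k) for k in range(c + 1))


def poisson_cdf(c: int, mean: float) -> float:
    """P(at most c failures) when the failure count is Poisson with this mean."""
    return sum(math.exp(-mean) * mean**k / math.factorial(k) for k in range(c + 1))


def p_accept_trials(true_reliability: float, n: int, c: int) -> float:
    """OC curve for a success/failure plan: accept if at most c of n trials fail."""
    return binom_cdf(c, n, 1.0 - true_reliability)


def p_accept_duration(true_mtbf: float, test_hours: float, c: int) -> float:
    """OC curve for a fixed-duration plan: accept if at most c failures occur,
    assuming failures arrive at a constant rate of 1 / true_mtbf."""
    return poisson_cdf(c, test_hours / true_mtbf)


def smallest_trial_plan(required: float, c: int, consumer_risk: float, max_n: int = 10_000) -> int:
    """Fewest trials n such that a system exactly at the required reliability
    is accepted with probability <= consumer_risk when c failures are allowed."""
    for n in range(c + 1, max_n + 1):
        if p_accept_trials(required, n, c) <= consumer_risk:
            return n
    raise ValueError("no plan found within max_n trials")


def required_test_hours(required_mtbf: float, c: int, consumer_risk: float) -> float:
    """Shortest fixed test length such that a system exactly at the required
    MTBF is accepted with probability <= consumer_risk. Uses bisection, since
    the acceptance probability falls as the test gets longer."""
    lo, hi = 0.0, required_mtbf
    while p_accept_duration(required_mtbf, hi, c) > consumer_risk:
        hi *= 2.0  # widen the bracket until it contains the answer
    for _ in range(100):
        mid = (lo + hi) / 2.0
        if p_accept_duration(required_mtbf, mid, c) > consumer_risk:
            lo = mid
        else:
            hi = mid
    return hi


# A coarse OC curve for one hypothetical plan: n = 30 trials, c = 2 failures allowed.
for r in (0.80, 0.85, 0.90, 0.95, 0.99):
    print(f"true reliability {r:.2f}: P(accept) = {p_accept_trials(r, 30, 2):.3f}")
```

For instance, with no failures allowed (c = 0), a required reliability of 0.9, and a Consumer Risk of 0.2, `smallest_trial_plan(0.9, 0, 0.2)` returns 16 trials. If the system's true reliability were a hypothetical 0.97, `1 - p_accept_trials(0.97, 16, 0)` puts the Producer Risk at roughly 0.39, which illustrates the trade-off: allowing more failures with more trials lowers Producer Risk while holding Consumer Risk fixed. Likewise, `required_test_hours(62.5, 0, 0.2)` gives about 100.6 hours of zero-failure testing for the 62.5-hour MTBF example.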

Construct an Operating Characteristic Curve

The specific methods used to construct operating characteristic curves vary depending on whether reliability is stated in terms of success/failure (probabilistic) or duration (e.g., MTBF). There are also several tools and applications available for constructing OC Curves and generating acceptance sampling plans.

Reliability Test Execution

Overall system reliability data are often collected during the operational testing phase when systems are operated in realistic field conditions. It is also possible to collect reliability data for individual components and some aspects of the system throughout developmental testing. It is important, however, to test for reliability across diverse conditions that reflect those of an actual mission.