Execute the Test

Now that you have carefully considered test design aspects such as response variables, factor selection, statistical modeling, and other elements of a rigorous, informative, and defensible design, you are probably eager to let the rubber meet the road and begin to answer the questions you started with. However, before executing your design, it is well worth dedicating some additional forethought to the nuts and bolts of test execution and to formulating contingency plans. What happens if the operators err? What do we do about component failures? What if the weather disagrees with our plans to test in dry conditions that day? All too often, well-thought-out designs fall prey to the complications and unforeseen events of testing and evaluation in a complex and dynamic world. Implementing best practices for executing the design and anticipating a few common hang-ups will give your test a fighting chance and can save you a headache in the long run.

Best Practices for Test Execution

Develop protocol & training: Perhaps as important as selecting response variables is determining who will be in charge of measuring those variables, who will operate the system, and how evaluators will know the order in which test events are to occur. Provide detailed and clear instructions so that your personnel are prepared to follow the test plan. Proper training also helps to avoid unnecessary complications and to limit the loss of information and resources when complications do occur. It is often a good idea to test the adequacy of any training before assessing the adequacy of the system itself.

Consider who is involved in the test: Quality results rely not only on the merits of your design, but also on its faithful implementation. It is often worthwhile to consider how humans might affect results and to design your test with these considerations in mind. This means thinking about factors beyond the system or device being tested, such as who is involved in conducting the test. System operators and even test personnel add sources of variability to the test, some of which can be avoided. Be sure to consider the abilities and constraints of the humans involved in testing. Two common constraints that interfere with our ability to attribute system performance to the factors of interest are learning effects (improved performance after continued operation or testing) and fatigue (decreased performance after continued operation or testing). Both change measured performance even though the operator, not the system, is responsible. Furthermore, different operators running different systems can confound results if the operators are not equivalent in skill, training, or other relevant characteristics. It is best to assign operators to systems at random so that the operator groups are, on average, comparable.

Tactics and doctrine: When presenting your protocol and training to operators, consider how they correspond to or conflict with established routines and behaviors. Be mindful of the degree to which you might be asking operators to use a system or conduct a mission in a way that runs counter to existing tactics and doctrine.

Test-run order: Stick as closely as possible to the preplanned order in which the test runs are conducted. Randomizing the order of test runs helps to meet an essential assumption of many statistical analyses. This order should be specified beforehand in the design phase and is typically generated by computer software (e.g., JMP, Minitab). The randomized order can be modified to fit operational realism as long as this does not introduce a systematic pattern (e.g., all Day runs are conducted first, or certain factor levels are never combined).

Collect and manage data carefully: The practical side of selecting response variables is determining how those variables are recorded and stored. In addition to specifying, for example, whether a human observes and writes down a test outcome or whether data are collected from sensors, it is beneficial to lay out a systematic and organized format in which the data will be stored. There are several data formats, but some are more common and more amenable to analysis than others. Planning how you will collect and store your data before the test begins can save time in the long run.
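
As an illustration of the run-order, operator-assignment, and data-format practices above, the following minimal Python sketch builds a randomized run sheet. Everything specific in it is an assumption made for the example: the factor names and levels, the operator IDs, the column names, and the choice of a full factorial design. It is not a replacement for design software such as JMP or Minitab. The `longest_streak` helper is only a rough diagnostic for one kind of systematic pattern, such as all Day runs falling together.

```python
import csv
import io
import itertools
import random

# Hypothetical factors, levels, and operator IDs, for illustration only.
FACTORS = {
    "time_of_day": ["Day", "Night"],
    "terrain": ["Urban", "Desert"],
    "range_km": [5, 10],
}
OPERATORS = ["op_A", "op_B", "op_C", "op_D"]


def full_factorial(factors, replicates=1):
    """Return one dict per run: every combination of levels, replicated."""
    names = list(factors)
    combos = list(itertools.product(*(factors[name] for name in names)))
    return [dict(zip(names, combo)) for _ in range(replicates) for combo in combos]


def randomized_run_sheet(factors, operators, replicates=1, seed=None):
    """Shuffle the run order and assign operators to runs at random."""
    rng = random.Random(seed)  # recording the seed makes the order reproducible
    runs = full_factorial(factors, replicates)
    rng.shuffle(runs)
    # Balanced operator pool: each operator appears about equally often.
    repeats = -(-len(runs) // len(operators))  # ceiling division
    pool = (list(operators) * repeats)[: len(runs)]
    rng.shuffle(pool)
    # One row per run, with an empty response column to fill in during the test.
    return [
        {"run_order": i, **run, "operator": op, "response": ""}
        for i, (run, op) in enumerate(zip(runs, pool), start=1)
    ]


def longest_streak(rows, factor):
    """Length of the longest stretch of consecutive runs sharing one level."""
    longest = current = 0
    previous = object()  # sentinel that equals no real level
    for row in rows:
        current = current + 1 if row[factor] == previous else 1
        previous = row[factor]
        longest = max(longest, current)
    return longest


def to_csv(rows):
    """Serialize the run sheet as CSV text, one row per test run."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    sheet = randomized_run_sheet(FACTORS, OPERATORS, seed=2024)
    print(to_csv(sheet))
    for factor in FACTORS:
        print(factor, "longest streak:", longest_streak(sheet, factor))
```

Storing one row per run, with one column per factor and per response, keeps the planned order and the recorded outcomes in the same file. If the order must later be adjusted for operational realism, re-running the streak check on the adjusted sheet gives a quick indication of whether a level has been grouped into a block.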

Responding to Deviations from the Plan

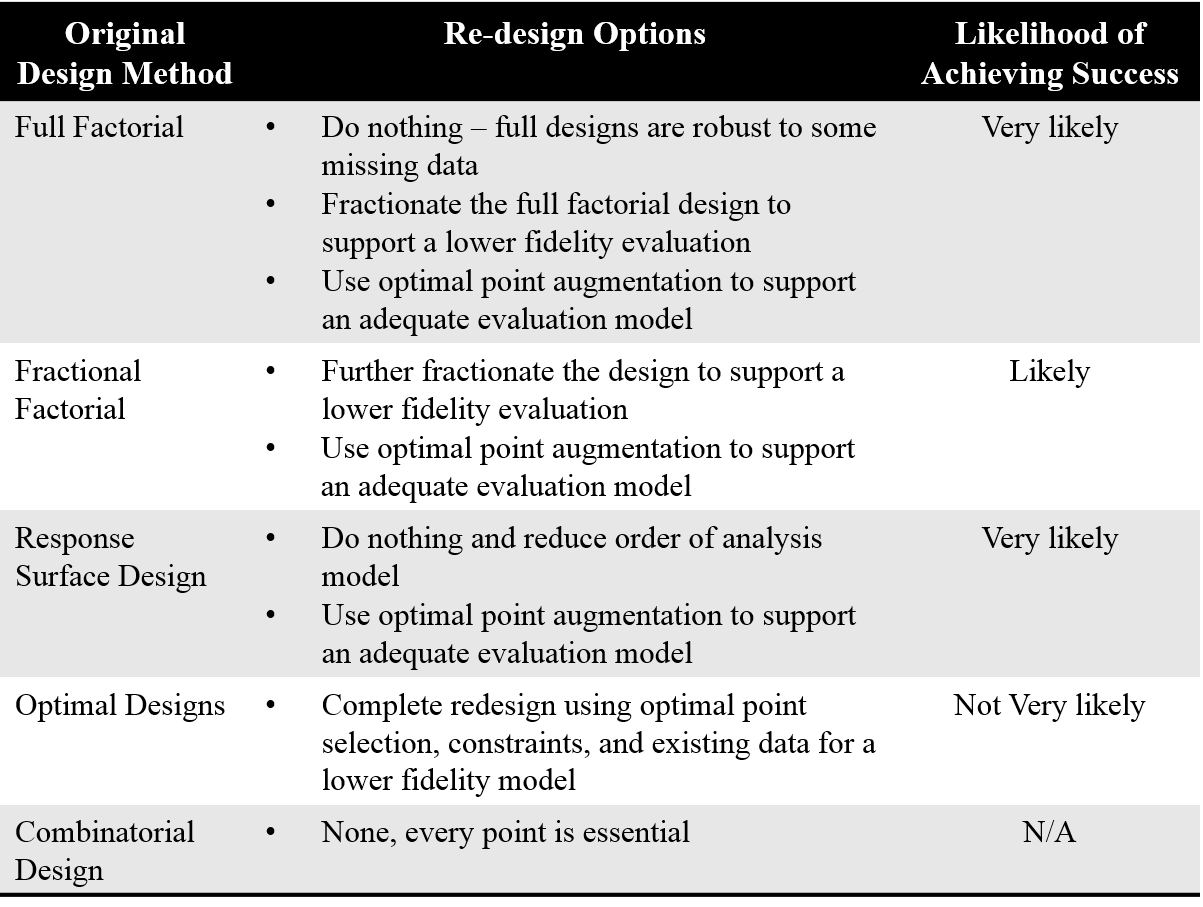

Despite best efforts, events often occur during testing that cause deviations from the test plan. These deviations reduce the test size, because canceled or failed runs leave missing data. It is important to assess whether these data are missing systematically or at random, as this must be considered during analysis. There are tools to help you determine which is the case. In addition to whether or not data are missing at random, the amount of missing data and the type of original design can help you decide how to proceed. If enough data are missing, it may be necessary to redesign the test and collect additional data. Test redesign options are partially determined by the original design. These options, as well as the likelihood that the redesign will succeed in meeting the original information goals, are listed in the table below. You can learn more about these techniques in the Construct Test Design section.
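
One simple way to probe whether missing runs are tied to a design factor is to cross-tabulate missing versus completed runs against a factor level and apply Fisher's exact test. The sketch below is an assumption-laden illustration, not necessarily one of the tools referred to above: the run log, the `time_of_day` factor, and the convention that a missing response is recorded as `None` are all hypothetical. A small p-value suggests that missingness is related to that factor. A large p-value does not prove the data are missing at random, since the cause may be something that was never recorded.

```python
from math import comb


def missingness_table(runs, factor, level):
    """2x2 counts: rows = (level, all other levels), columns = (missing, completed)."""
    table = [[0, 0], [0, 0]]
    for run in runs:
        row = 0 if run[factor] == level else 1
        col = 0 if run["response"] is None else 1  # None marks a missing run
        table[row][col] += 1
    return table


def fisher_exact_two_sided(table):
    """Two-sided Fisher exact p-value for a 2x2 table [[a, b], [c, d]]."""
    (a, b), (c, d) = table
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum the probabilities of all tables no more likely than the observed one.
    return sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-9))


if __name__ == "__main__":
    # Hypothetical run log: 12 Day runs (1 missing) and 12 Night runs (7 missing).
    runs = (
        [{"time_of_day": "Day", "response": None}] * 1
        + [{"time_of_day": "Day", "response": 1.0}] * 11
        + [{"time_of_day": "Night", "response": None}] * 7
        + [{"time_of_day": "Night", "response": 1.0}] * 5
    )
    table = missingness_table(runs, "time_of_day", "Day")
    print("table:", table)
    print("p-value:", round(fisher_exact_two_sided(table), 4))
```

The same check can be repeated for each factor in the design. With many factors, some small p-values will appear by chance, so the results are best read as prompts for a closer look at the test log rather than as verdicts.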

Jamming System Case Study

Of course, ideal results come about when a good design plan is followed. However, complications will undoubtedly occur, and evaluators will have to decide how to respond to their unique circumstances. Learning what can be done in response to complications, and what the consequences are for the design, can aid in these decisions. This case study of a jamming system presents several complications that the program manager anticipated, as well as possible design modifications and the corresponding loss of information.